Tag: charting

-

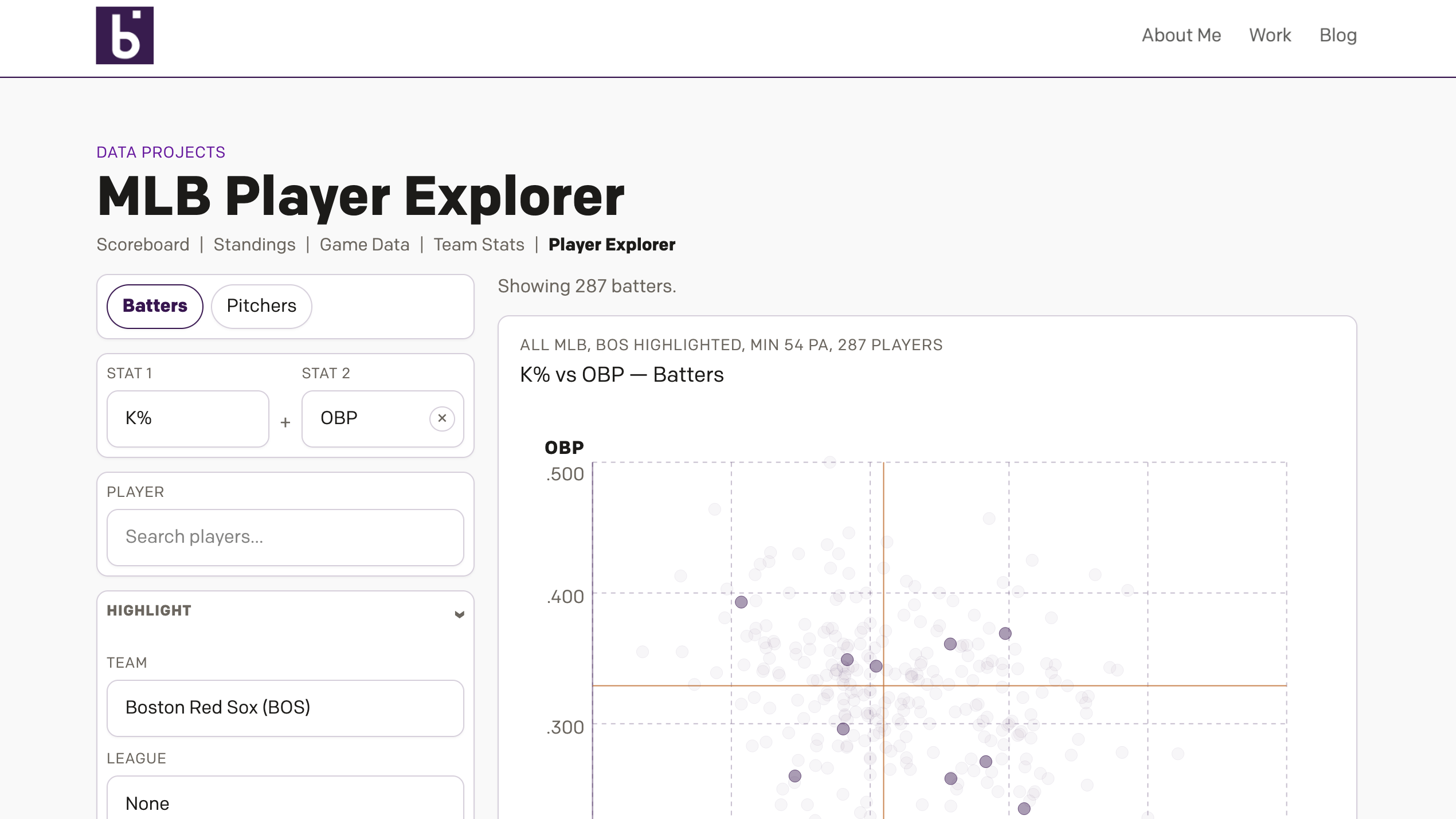

Revenge of the Nerds

This past weekend I thought I would be writing about something else, and perhaps I still will later this week, but for now we turn to the Boston Red Sox firing Alex Cora, their manager; Jason Varitek, beloved Sox icon, in the dugout as game planning and run prevention coach; Ramon Vazquez, bench coach;…

-

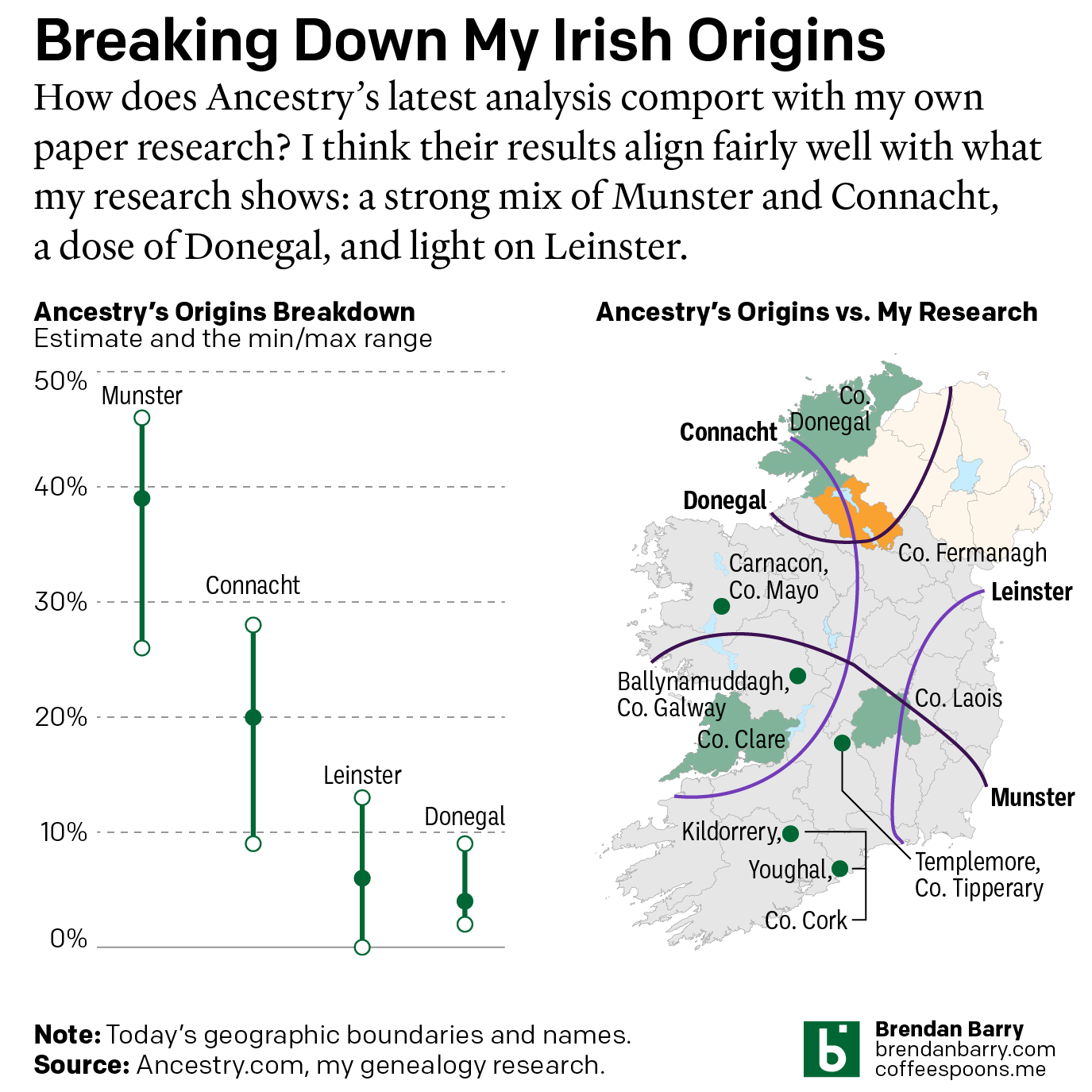

Still Irish

Last October Ancestry.com updated their ethnic origins breakdowns. Longtime readers will know these are not the most useful tools for helping one in their genealogical research. But, if they garner interest in one’s family history and motivate people to explore their own pasts, more power to them. I only encourage those people to dig a…

-

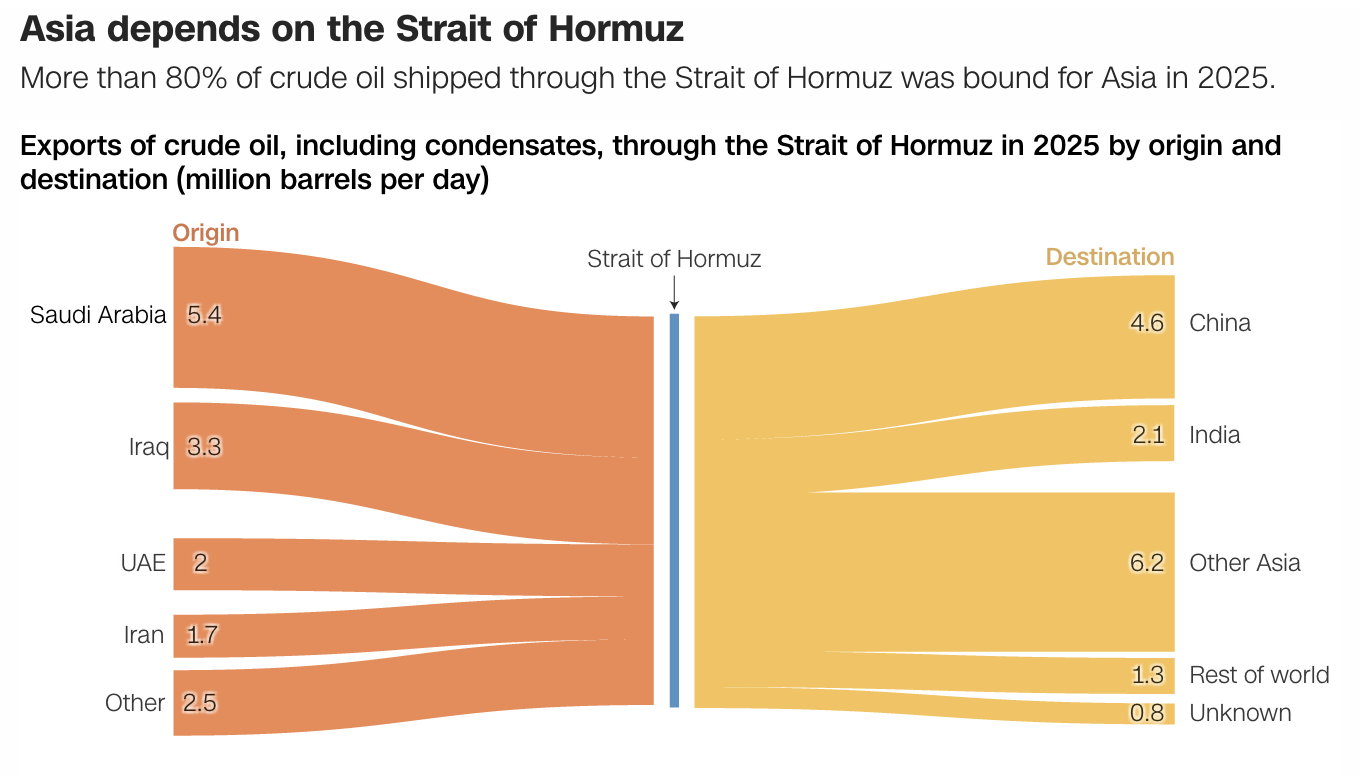

Mission Accomplished

Last weekend the United States and Israel preemptively struck Iran and kicked off a regional war. As I type this Monday morning, the US–Israeli strike force assassinated the ayatollah and numerous other senior Iranian officials, but this seems to have been anticipated to a degree and the regime quickly retaliated and has delegated roles and responsibilities.…

-

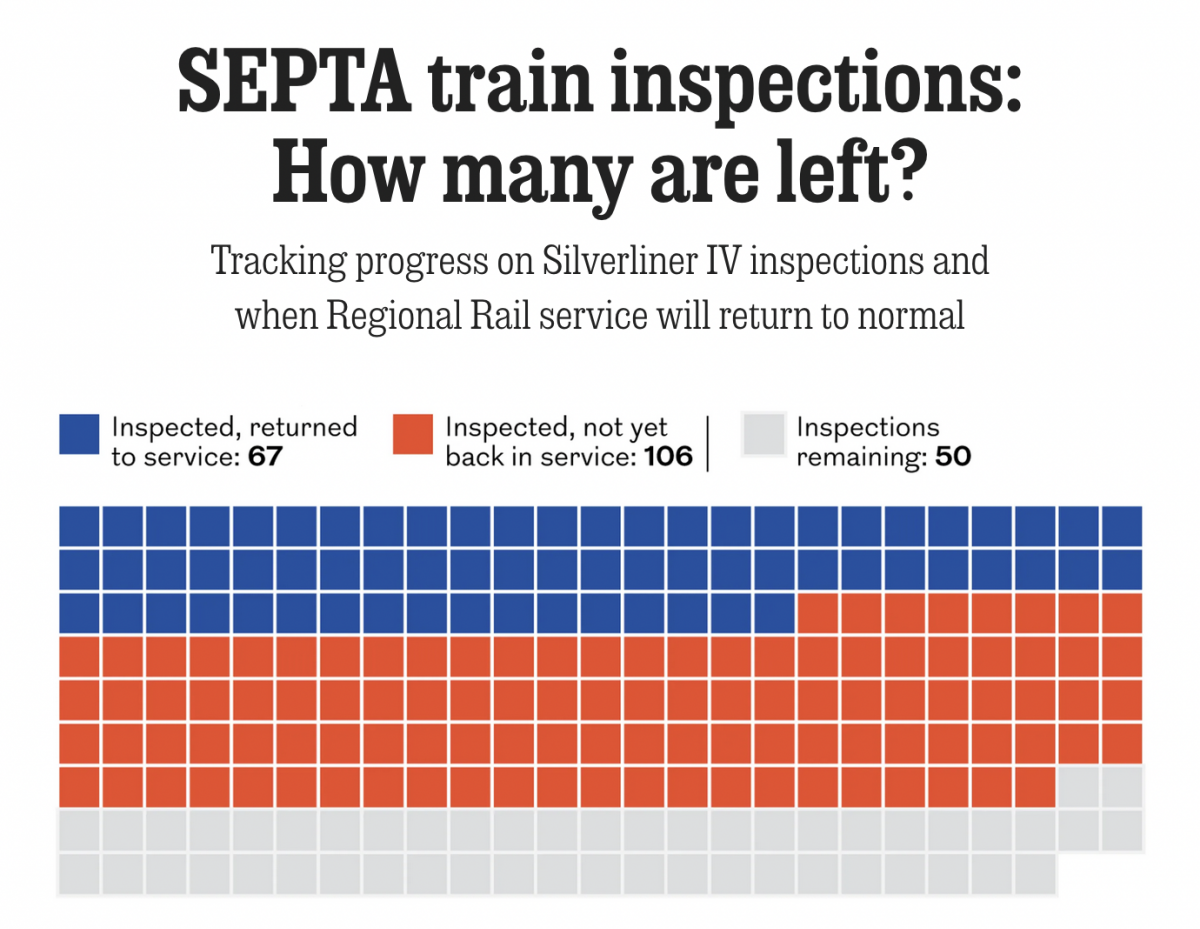

Tarnished Linings

Last month the National Transportation Safety Board (NTSB) ordered Philadelphia’s public transit system, SEPTA, to inspect the backbone of its commuter rail service, Regional Rail: all 225 Silverliner IV railcars. The Silverliner IV fleet, aged over 50 years, suffered a series of fires this summer and the NTSB investigators wanted them inspected by the end…

-

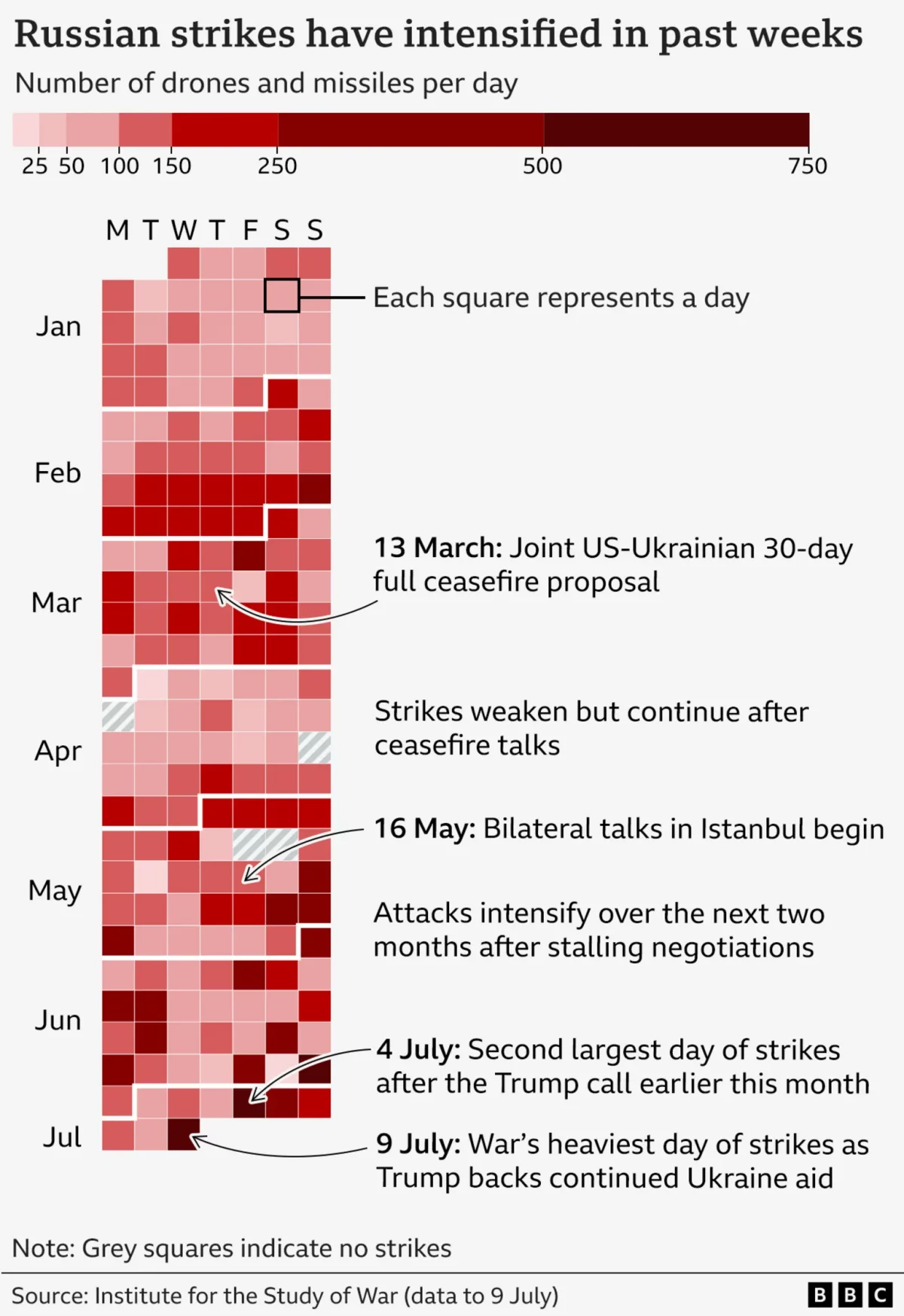

It’s Raining Drones

Last Friday the BBC published an article about the US’ resumption of supplying military assistance to Ukraine in its defence against Russia’s invasion. But in that article, the author referenced the increased intensity of Russian drone and missile strikes on Ukraine over that week. To show the intensity, the BBC included this graphic, which incorporates…

-

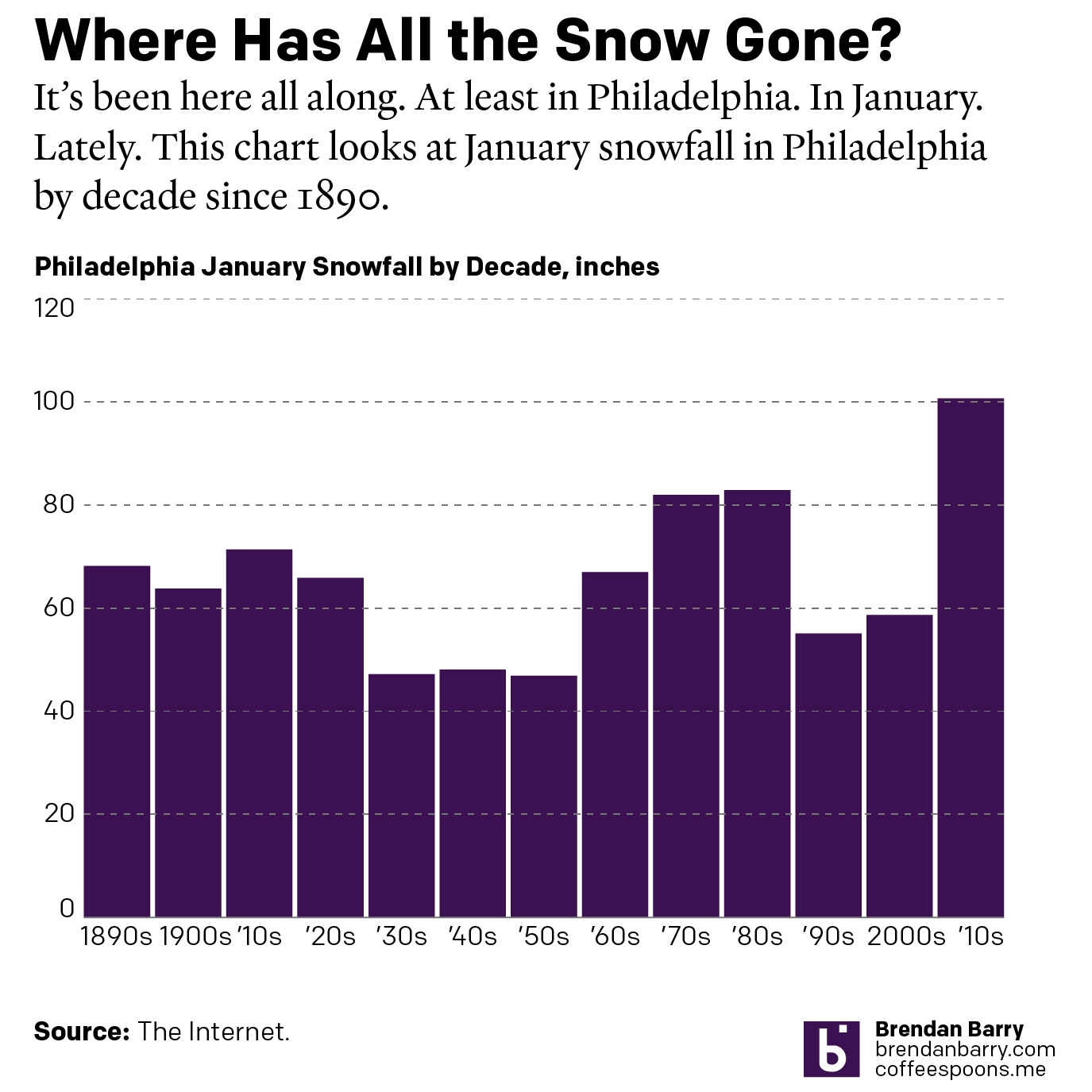

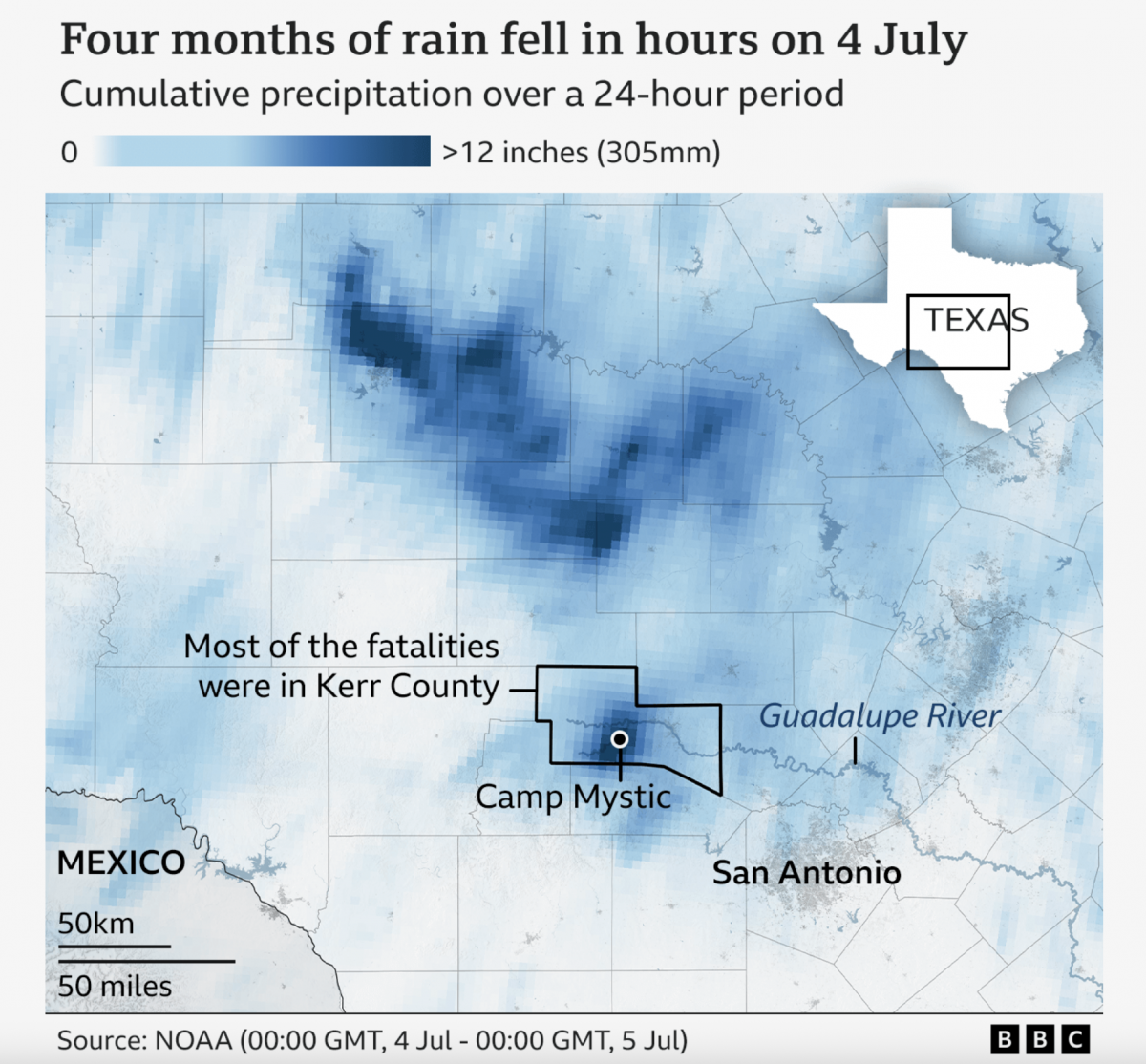

A Warming Climate Floods All Rivers

Last weekend, the United States’ 4th of July holiday weekend, the remnants of a tropical system inundated a central Texas river valley with months’ worth of rain in just a few short hours. The result? The tragic loss of over 100 lives (and authorities are still searching for missing people). Debate rages about why the…